The Unseen Revolution: Computer vision & deskless worker symbiosis

How computer vision’s rise will impact deskless workers

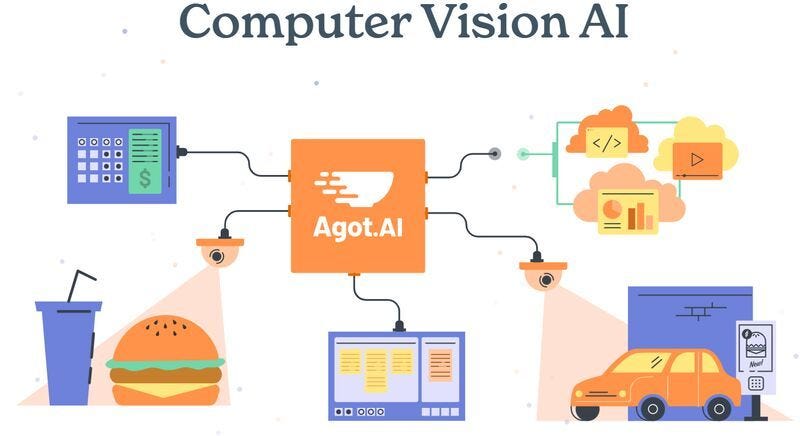

Computer vision is poised to transform daily operations for businesses in ways not seen since the advent of the spreadsheet. With cameras cheaper than ever and advances in AI, a new wave of apps leveraging computer vision promises to optimize everything from inventory management to quality control.

Cameras have gone from costing hundreds of dollars to tens of dollars. As a result, business owners can now afford to fully equip their facilities with cameras. At the same time, public acceptance of cameras in everyday life has steadily grown. But much of this camera data has gone underused, because computer vision has seen limited adoption in business and workforce settings.

With the rise of large multi-modal AI models, companies can finally start exploiting this data to improve their business operations. A restaurant can monitor when to bus a table or refill a drink. A retail chain can track real-time inventory levels and shrinkage. And this is just the beginning. With future advances in computer vision and augmented reality, employees may be able to take on new tasks without training and build or repair items faster than ever before.

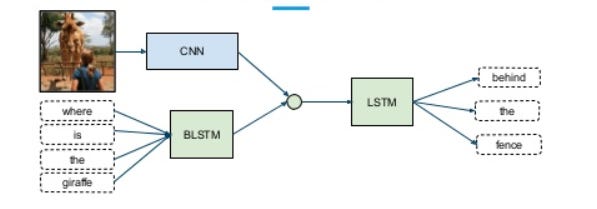

How computer vision apps work

At their core, many computer vision apps share a similar structure: Cameras capture images and video -> AI models parse events and insights -> Apps output actions or alerts. These systems are more than just a camera connected to PyTorch, though.
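The three-stage flow (cameras, then models, then actions or alerts) can be sketched as a small Python pipeline. This is a minimal illustration under stated assumptions, not any particular product's design: `Frame`, `Alert`, `run_pipeline`, the `table_needs_bussing` label, and `stub_model` are all hypothetical, and a real system would replace the stub with a trained vision model. The per-camera cooldown is one assumed way to keep a persistent condition (a table that stays dirty) from flooding staff with duplicate alerts.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Iterable, Iterator


@dataclass
class Frame:
    """One captured image from a (hypothetical) camera."""
    camera_id: str
    timestamp: float  # seconds since some reference time
    pixels: bytes     # placeholder for raw image data


@dataclass
class Alert:
    """An action or alert surfaced to a worker or manager."""
    camera_id: str
    timestamp: float
    message: str


def run_pipeline(
    frames: Iterable[Frame],
    model: Callable[[Frame], list[str]],
    rules: dict[str, str],
    cooldown_s: float = 60.0,
) -> Iterator[Alert]:
    """Capture -> model -> alert, suppressing repeats per (camera, label)."""
    last_sent: dict[tuple[str, str], float] = {}
    for frame in frames:                # stage 1: captured frames
        for label in model(frame):      # stage 2: model emits event labels
            message = rules.get(label)  # stage 3: map events to actions
            if message is None:
                continue
            key = (frame.camera_id, label)
            prev = last_sent.get(key)
            if prev is not None and frame.timestamp - prev < cooldown_s:
                continue  # same condition alerted recently; skip
            last_sent[key] = frame.timestamp
            yield Alert(frame.camera_id, frame.timestamp, message)


# Hypothetical stand-in for a trained model: flags frames tagged "dirty".
def stub_model(frame: Frame) -> list[str]:
    return ["table_needs_bussing"] if frame.pixels == b"dirty" else []


frames = [
    Frame("table-7", 0.0, b"dirty"),
    Frame("table-3", 10.0, b"clean"),
    Frame("table-7", 30.0, b"dirty"),  # within cooldown: suppressed
    Frame("table-7", 90.0, b"dirty"),  # cooldown elapsed: alert again
]
alerts = list(run_pipeline(frames, stub_model, {"table_needs_bussing": "Bus table"}))
for a in alerts:
    print(a.camera_id, a.timestamp, a.message)
```

Run as written, this prints two "Bus table" alerts for `table-7`, at 0.0 and 90.0 seconds. Keeping the rules as a plain label-to-message mapping separates what the model sees from what the business wants done about it.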

CV applications need to build out several core components:

Cameras to capture data

Clean data pipelines

Models trained on relevant data

Actionable insights and alerts

Intuitive user interfaces

Integrations with relevant inventory, order, and HR systems

The choice between fixed and mobile cameras also affects complexity. Companies that use mobile cameras (on robots or drones) need to build out navigation, safety, collaboration, and human-interaction patterns. If a robot is used, how it looks when it interacts with a person matters a great deal, especially if it operates around customers. Does the robot have a display? What kind of face does it show?

With the right training data and algorithms, these apps can tell workers what to do next or alert managers about risks. Paired with augmented reality devices, the apps can even guide workers through unfamiliar tasks.

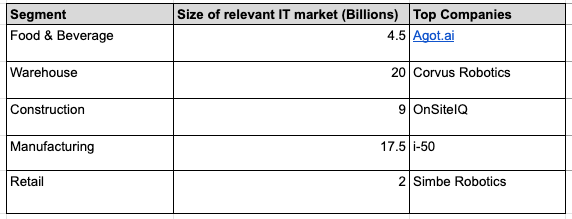

Relevant markets and market sizes

Use cases are wide-ranging, spanning sectors like food service, manufacturing, warehousing, retail, and more. For example, food chains can ensure consistency and cleanliness across franchises. Manufacturers can automate quality control and assembly tasks. Energy firms can quickly identify leaks, spills, or unsafe conditions.

What are these systems replacing?

In most of these situations, similar information today comes from human observation or inference. Managers can walk facilities to monitor conditions, customers can report on cleanliness, and workers can flag issues (as with the andon cord in Toyota's production system). In some cases, computer systems can calculate the difference between customer orders and inventory orders to understand waste and usage.
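The order-versus-inventory comparison is simple arithmetic, sketched here in Python. This is a minimal illustration assuming hypothetical recipe data and assuming on-hand stock is the same at the start and end of the period; `implied_usage` and `unexplained_variance` are illustrative names, not an existing tool. The leftover figure is loss the numbers cannot explain, and nothing in it says whether the cause was spoilage, over-portioning, or theft.

```python
from __future__ import annotations

from collections import defaultdict


def implied_usage(sales: dict[str, int], recipes: dict[str, dict[str, float]]) -> dict[str, float]:
    """Ingredient quantities that customer orders *should* have consumed."""
    usage: dict[str, float] = defaultdict(float)
    for item, qty_sold in sales.items():
        for ingredient, per_item in recipes[item].items():
            usage[ingredient] += qty_sold * per_item
    return dict(usage)


def unexplained_variance(
    purchased: dict[str, float],
    sales: dict[str, int],
    recipes: dict[str, dict[str, float]],
) -> dict[str, float]:
    """Purchased minus implied usage; assumes on-hand stock is unchanged."""
    usage = implied_usage(sales, recipes)
    return {ing: qty - usage.get(ing, 0.0) for ing, qty in purchased.items()}


# Hypothetical menu, sales, and purchases for one period.
recipes = {"burger": {"bun": 1, "patty": 1}, "double": {"bun": 1, "patty": 2}}
sales = {"burger": 40, "double": 10}
purchased = {"bun": 55, "patty": 70}
variance = unexplained_variance(purchased, sales, recipes)
print(variance)  # {'bun': 5.0, 'patty': 10.0}
```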

These alternatives are inexpensive and require no process changes, but they aren't measured for efficacy. New solutions will provide more frequent and more accurate information, but they also require upfront investment and process changes.

Go-to-market: more than just tech

For startups in this space, choosing the right market is essential. Factors like market size, structure, and data requirements will define the pace of growth. A company selling to governments, for instance, must navigate political landscapes and budget cycles, a very different challenge from selling into the growing but still-nascent robotics market.

Enterprise buyers will benefit the most

Enterprises—whether they are quick-service restaurants, third-party logistics providers, or manufacturers—are likely to be the biggest buyers. With potential contracts ranging from tens to hundreds of millions of dollars, this is a market defined by high-stakes sales cycles, but with enormous opportunities for customer retention and expansion.

Enterprise buyers have the financial incentives and wherewithal to adopt and integrate computer vision systems. The resulting cost savings may move overall EBITDA margin by only a few basis points, but for large public companies that is meaningful.
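Why a few basis points matter at enterprise scale is easiest to see with the arithmetic. A minimal sketch, where `ebitda_uplift_usd` and the revenue figures are hypothetical and the margin gain is assumed to apply uniformly to annual revenue (one basis point is 0.01%, or 1/10,000):

```python
def ebitda_uplift_usd(annual_revenue_usd: float, margin_gain_bps: float) -> float:
    """Extra annual EBITDA from a margin gain; 1 basis point = 0.01% = 1/10,000."""
    return annual_revenue_usd * margin_gain_bps / 10_000


# Hypothetical figures: the same 5 bps gain at two very different scales.
print(ebitda_uplift_usd(10e9, 5))  # 5000000.0 for a $10B-revenue enterprise
print(ebitda_uplift_usd(2e6, 5))   # 1000.0 for a $2M-revenue single location
```

The same improvement that is a rounding error for one location is worth millions of dollars a year across a large enterprise, which helps explain why large contracts can justify long sales cycles.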

Staying competitive

In the enterprise space, the easier something is to integrate, the easier it is to replace. With foundational technologies available as open source, competitive advantage lies in customization, scale, and integration. If a solution provides value, the barriers to entry are low, and there is no integration friction, more suppliers will enter and compete away the profits in the space.

Deeply integrated solutions, though more difficult to sell, are also more difficult to replace.

These systems will impact how people work

For employees, these systems present a dual-sided future—both utopian and dystopian. On one hand, these tools serve as virtual co-pilots, accelerating onboarding, enhancing productivity, and solving customer issues. On the other hand, they offer a lens into worker efficiency and value, enabling rewards for good performance or, conversely, facilitating employee replacement. Incentives like spot bonuses, expedited payments, and promotions could rely on data from these systems.

For managers, these technologies can integrate with Human Resource Information Systems (HRIS) and talent management platforms. These new data points offer actionable insights for organizational design and workforce planning, helping leaders make data-driven staffing decisions based on projected demand.
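A minimal sketch of staffing based on projected demand, assuming the vision system supplies a measured throughput (tasks per worker-hour) and some forecast supplies hourly demand. Both inputs and the `staff_needed` helper are hypothetical; a real workforce-planning tool would also need to account for breaks, skills, and labor rules.

```python
import math


def staff_needed(projected_tasks: list[float], tasks_per_worker_hour: float, min_staff: int = 1) -> list[int]:
    """Headcount per hour: demand divided by throughput, rounded up, with a floor."""
    if tasks_per_worker_hour <= 0:
        raise ValueError("throughput must be positive")
    return [max(min_staff, math.ceil(t / tasks_per_worker_hour)) for t in projected_tasks]


# Hypothetical: projected orders for four hours, ~10 orders per worker-hour.
print(staff_needed([12, 30, 45, 8], 10))               # [2, 3, 5, 1]
print(staff_needed([12, 30, 45, 8], 10, min_staff=2))  # [2, 3, 5, 2]
```

Rounding up rather than to the nearest integer errs toward covering demand; the `min_staff` floor reflects that some roles must be filled even in quiet hours.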

A tipping point for business productivity and new HR data

As sensor prices fall, machine learning vision models improve, robots become cheaper, and software integrations easier, we are going to see more productivity systems powered by computer vision.

These systems will give new, comprehensive views on worker and business productivity. Optimistically, co-pilot systems can help deskless workers get recognized more frequently and faster. These systems become another data point for organizations to understand how talent drives their business results.